Wolfram Language Paclet Repository

Community-contributed installable additions to the Wolfram Language

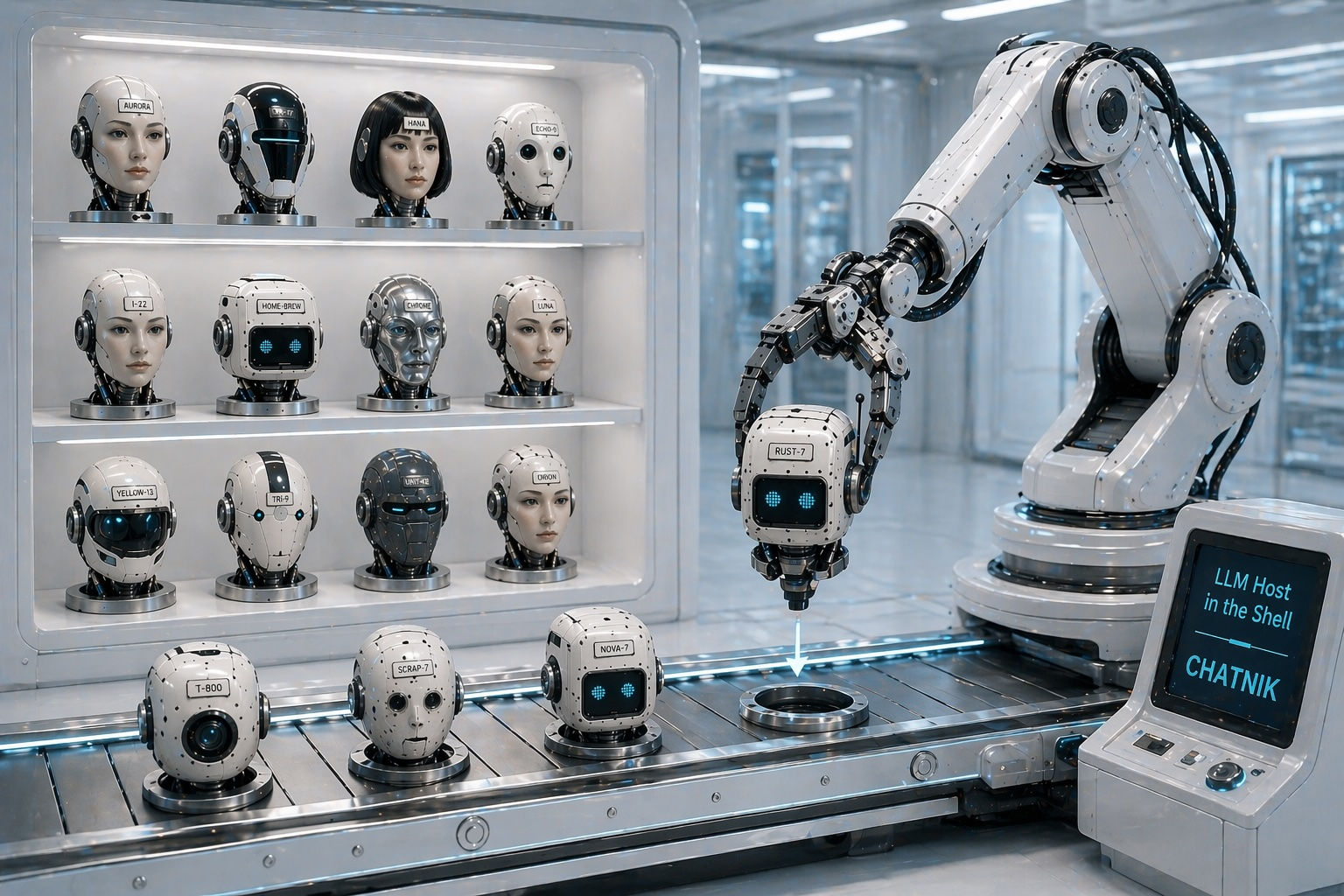

CLI scripts for conversing with persistent LLM personas

Contributed by: Anton Antonov

Command Line Interface (CLI) scripts to manage and interact with multiple, persistent Large Language Model (LLM) chat objects. Access to repository prompts is provided via automatic prompt expansion. Access to chat object elements (history, models, etc.) facilitates the creation of useful CLI pipelines.

To install this paclet in your Wolfram Language environment, evaluate this code:

PacletInstall["AntonAntonov/Chatnik"]

To load the code after installation, evaluate this code:

Needs["AntonAntonov`Chatnik`"]

The script "LLMChat" is used to interact with the chat objects. Create and chat with an LLM persona named "yoda1" (using the Yoda chat persona):

LLMChat 'hi, who are you?' --i=yoda1 --prompt=@Yoda
# Hmmm. Yoda, I am. Jedi Master, wise and old. Much to teach, I have. Yes, hmmm.

Continue the conversation with "yoda1":

LLMChat 'since when do you use a green light saber?' --chat-id=yoda1
# Green, my lightsaber is, yes. Symbol of a Jedi Consular, it is. Wisdom and harmony, it represents. Since my training as a Jedi, I have wielded it. A sign of my connection with the Force, green blades are. Hmm. Use it wisely, I do.

Execute a chat object management command using the script "LLMChatMeta". For example, view the whole interaction with "yoda1":

LLMChatMeta full-text --chat-id=yoda1

Clear the messages of "yoda1":

LLMChatMeta clear --chat-id=yoda1

Copy the paclet's scripts to a directory that is already in the shell's PATH variable, for example "~/.local/bin".

On macOS, if no directory is specified for ChatnikCopyScripts (i.e., the directory is Automatic), the paclet's scripts are copied to the "~/Applications" directory.
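The scripts can be called by name only if the directory that holds them is on the shell's PATH. The following is a minimal sketch of such a check and is not part of the paclet: the helper name `path_contains` is hypothetical, and `$HOME/.local/bin` is assumed only because it is the example directory mentioned in the text.

```shell
#!/bin/sh
# Hypothetical helper (not part of Chatnik): succeed if directory $1
# appears in the colon-separated list $2 (defaults to the current $PATH).
path_contains() {
    case ":${2-$PATH}:" in
        *":$1:"*) return 0 ;;
        *)        return 1 ;;
    esac
}

# Assumed target directory, taken from the example in the text.
target="$HOME/.local/bin"

if path_contains "$target"; then
    echo "$target is on PATH"
else
    echo "$target is not on PATH; add it, e.g.: export PATH=\"$target:\$PATH\""
fi
```

Wrapping the list in colons lets the `case` pattern match whole entries only, so "/usr" does not match "/usr/bin". Once the directory is on PATH, `command -v LLMChat` should print the location of the copied script.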

Longer CLI pipelines make "Chatnik" very useful. For example:

cat README.md | LLMChat - --prompt=@Summarize && LLMChat "&Translate|Russian^"

The script "LLMChatMeta" is used to manage the chat objects. Here is its usage message:

LLMChatMeta --help
# Chat with persistent LLM-chat objects.
#
# * Mandatory positional arguments:
#   NAME      DOCUMENTATION
#   command   Command, one of: card, clear, context, delete, file, first-message, last-message, list, load-llm-personas, message, messages.
#
# * Optional arguments (must be passed as --name=... in any order):
#   NAME      DEFAULT     DOCUMENTATION
#   chat-id               Chat ID.
#   all       false       Whether to apply the command to all chat objects or not.
#   n         -Infinity   Messages to clear. (For 'clear' and 'messages' only.)
#   index     -1          Message index. (For 'message' only)
#   format                Format of the result. (For 'list' and 'context' only.)

LLMChatMeta list --format=json
# {
#   "yoda1":{
#     "ChatID":"aa02f6c7-9223-4ebe-b216-607253b09fa7",
#     "Messages":7,
#     "LLMConfiguration":{
#       "Service":"OpenAI",
#       "Name":"gpt-4.1-mini"
#     },
#     "Usage":"274 tokens"
#   }
# }

Wolfram Language Version 14.3