Details and Options

The Tucker decomposition is also known as the higher-order singular value decomposition (HOSVD).

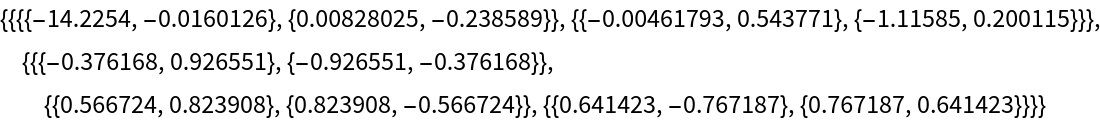

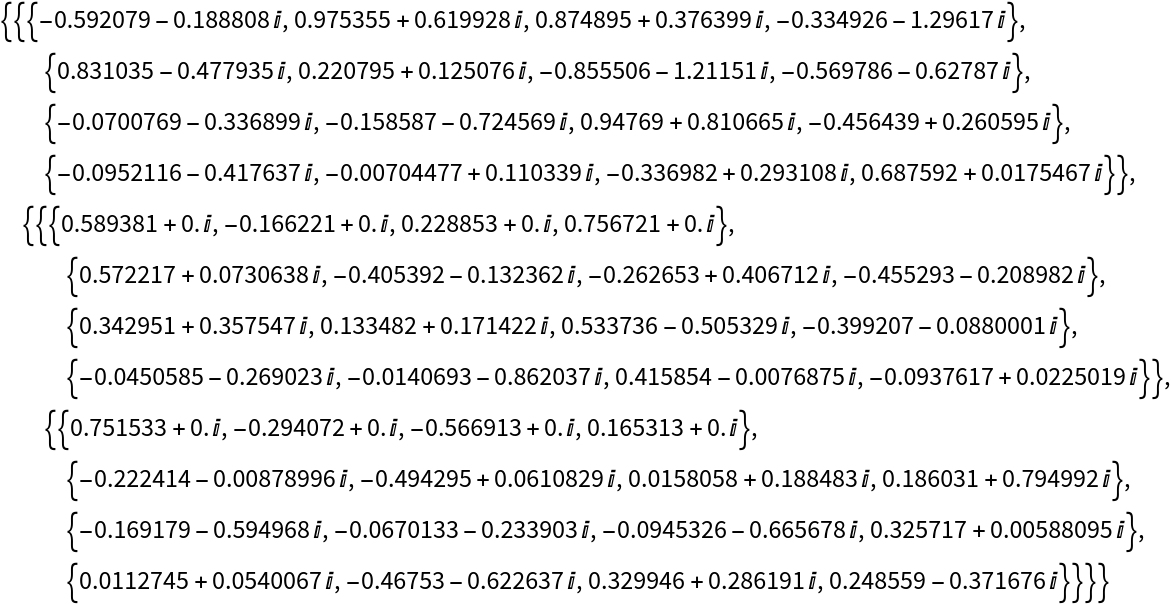

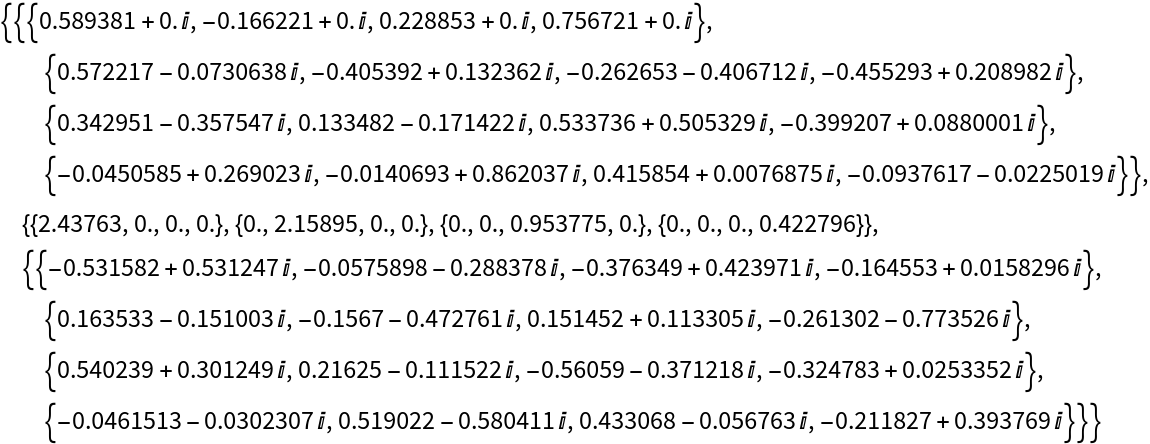

The Tucker decomposition expresses a tensor as the multilinear product of a "core tensor" and a set of factor matrices with orthonormal columns (the factor matrices are unitary when they are square).

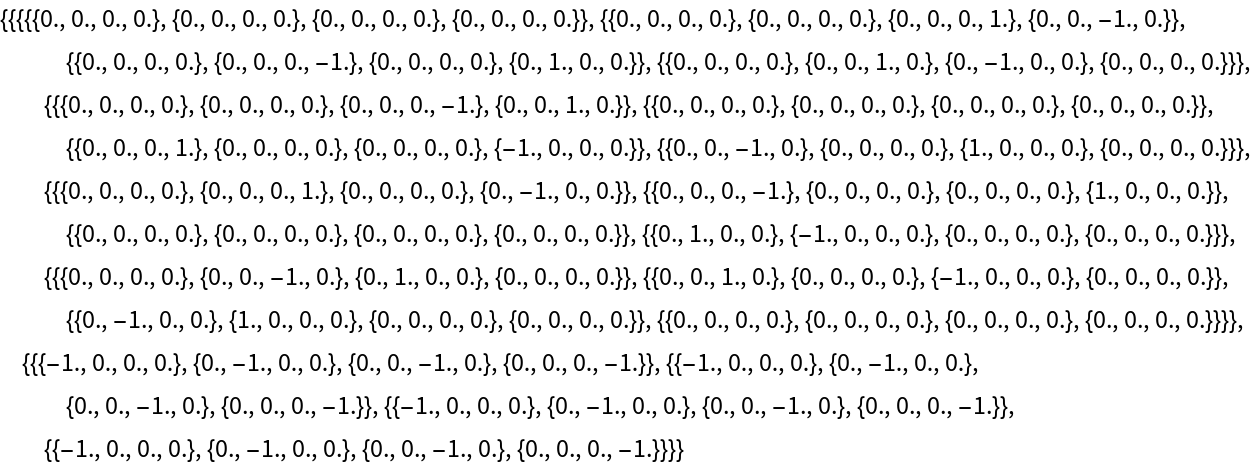
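As a reading aid, the multilinear product can be written out explicitly. The symbols below are assumptions introduced for illustration and may differ from the notation used in the figures: $\mathcal{X}$ is the input tensor with dimensions $d_1, \ldots, d_N$, $\mathcal{G}$ is the core tensor with dimensions $c_1, \ldots, c_N$, and $U^{(n)}$ is the $d_n \times c_n$ factor matrix for dimension $n$.

$$
\mathcal{X} \approx \mathcal{G} \times_1 U^{(1)} \times_2 U^{(2)} \cdots \times_N U^{(N)},
\qquad
x_{i_1 i_2 \ldots i_N} \approx \sum_{j_1=1}^{c_1} \sum_{j_2=1}^{c_2} \cdots \sum_{j_N=1}^{c_N} g_{j_1 j_2 \ldots j_N}\, u^{(1)}_{i_1 j_1}\, u^{(2)}_{i_2 j_2} \cdots u^{(N)}_{i_N j_N}
$$

The relation holds with equality when no singular values are truncated.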

ResourceFunction["TuckerDecomposition"] computes the Tucker decomposition of an input tensor by iteratively applying the singular value decomposition (SVD) to the matrices obtained by flattening the tensor along each of its dimensions.
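To make the flattening step concrete, here is a minimal sketch using the built-in Flatten. The helper name unfold is hypothetical and is not part of the resource function; the actual implementation may flatten differently.

```wolfram
(* hypothetical helper: move level k to the front and merge all other levels,
   giving a matrix with one row per index along dimension k *)
unfold[t_, k_] := Flatten[t, {{k}, Delete[Range[ArrayDepth[t]], k]}]

t = Array[a, {2, 3, 4}];
Dimensions[unfold[t, 1]]   (* {2, 12} *)
Dimensions[unfold[t, 2]]   (* {3, 8} *)
Dimensions[unfold[t, 3]]   (* {4, 6} *)
```

Each of these matrices is what an SVD would be applied to for the corresponding dimension.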

ResourceFunction["TuckerDecomposition"] internally computes an effective precision for the tensor and checks that it has a depth of at least 2. If the tensor's depth is less than 2, the function returns the input tensor as the core, together with an empty list of factors.
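The depth and precision of an input can be inspected with built-in functions. The lines below only illustrate those two quantities; whether the resource function uses ArrayDepth and Precision in exactly this way is an assumption.

```wolfram
ArrayDepth[{1., 2., 3.}]             (* 1: a vector, below the depth-2 threshold *)
ArrayDepth[{{1., 2.}, {3., 4.}}]     (* 2 *)
Precision[{{1., 2.}, {3., 4.}}]      (* MachinePrecision *)
Precision[{{1, 2}, {3, 4}}]          (* Infinity, since the input is exact *)
```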

rank can be specified as a list or as a single value; a single value is expanded to a list whose length equals the input tensor's depth. If a rank is set to Infinity, the function will not truncate any singular values.
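The expansion of a single rank value can be sketched as follows. The helper expandRank is hypothetical and only mirrors the behavior described above.

```wolfram
(* hypothetical helper: a list is used as given,
   a single value is repeated once per tensor level *)
expandRank[rank_List, depth_Integer] := rank
expandRank[rank_, depth_Integer] := ConstantArray[rank, depth]

expandRank[2, 3]             (* {2, 2, 2} *)
expandRank[{2, 3, 4}, 3]     (* {2, 3, 4} *)
expandRank[Infinity, 3]      (* {Infinity, Infinity, Infinity} *)
```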

The Tolerance option is used to truncate singular values along each dimension of the tensor.
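For orientation, this is how Tolerance behaves in the built-in SingularValueList, where it is interpreted relative to the largest singular value. That the resource function passes the option through with the same meaning is an assumption.

```wolfram
m = DiagonalMatrix[{1., 10.^-3, 10.^-12}];

(* Tolerance -> 0 keeps every nonzero singular value *)
Length[SingularValueList[m, Tolerance -> 0]]        (* 3 *)

(* singular values below 10^-6 times the largest one are dropped *)
Length[SingularValueList[m, Tolerance -> 10^-6]]    (* 2 *)
```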

The function iteratively applies the SVD to the tensor, keeping the singular vectors that correspond to nonzero singular values (as determined by Tolerance) and updating the tensor by multiplying it by the transpose of those singular vectors.
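The iteration described above can be sketched in a few lines. This is an illustrative reimplementation under stated assumptions, not the source of the resource function: the name tuckerSketch, the {core, factors} return form, and the use of ConjugateTranspose (which reduces to the transpose for real input) are all hypothetical choices, and the input is assumed to be a nonzero numeric array of depth at least 2.

```wolfram
(* hypothetical sketch of a sequentially truncated HOSVD *)
tuckerSketch[tensor_?ArrayQ, ranks_List, tol_ : Automatic] :=
 Module[{core = tensor, n = ArrayDepth[tensor], factors = {}, m, kept, u},
  Do[
   (* flatten the current first level against all remaining levels *)
   m = Flatten[core, {{1}, Range[2, n]}];
   (* count singular values above the tolerance, capped by the requested rank *)
   kept = Min[Length[SingularValueList[m, Tolerance -> tol]], ranks[[k]]];
   (* leading left singular vectors, one column per kept singular value *)
   u = First[SingularValueDecomposition[m]][[All, ;; kept]];
   AppendTo[factors, u];
   (* project the first level onto the kept singular vectors *)
   core = ConjugateTranspose[u] . core;
   (* rotate levels so the next dimension comes first;
      after n rotations the original level order is restored *)
   core = Transpose[core, RotateRight[Range[n]]],
   {k, n}];
  {core, factors}]

t = RandomReal[1, {3, 4, 5}];
{core, factors} = tuckerSketch[t, {2, 3, Infinity}];
Dimensions[core]           (* {2, 3, 5} *)
Dimensions /@ factors      (* {{3, 2}, {4, 3}, {5, 5}} *)
```

Rotating the levels after each step means every SVD acts on the first level, which avoids writing a separate flattening for each dimension.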

The output core tensor has dimensions $\{c_1, c_2, \ldots, c_N\} = \{\min(d_1, r_1), \min(d_2, r_2), \ldots, \min(d_N, r_N)\}$, where the $d_i$ are the dimensions of the input tensor and the $r_i$ are the provided or computed ranks along each dimension. The factor matrices then have the corresponding dimensions $\{\{d_1, c_1\}, \{d_2, c_2\}, \ldots, \{d_N, c_N\}\}$.
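The dimension bookkeeping can be checked with a short calculation. The variable names and the example values are hypothetical.

```wolfram
dims = {3, 4, 5};            (* dimensions of the input tensor *)
ranks = {2, Infinity, 10};   (* requested ranks along each dimension *)

coreDims = MapThread[Min, {dims, ranks}]     (* {2, 4, 5} *)
factorDims = Transpose[{dims, coreDims}]     (* {{3, 2}, {4, 4}, {5, 5}} *)
```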