Details and Options

TensorTrainDecomposition decomposes a numerical array of any rank into a Tensor Train (also known as a Matrix Product State) representation.

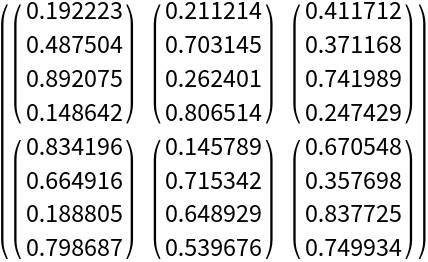

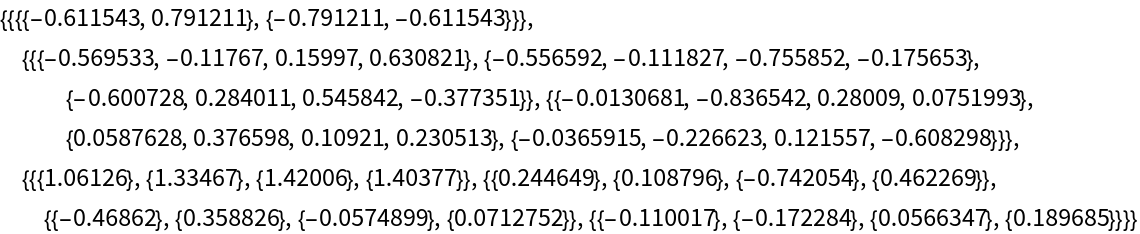

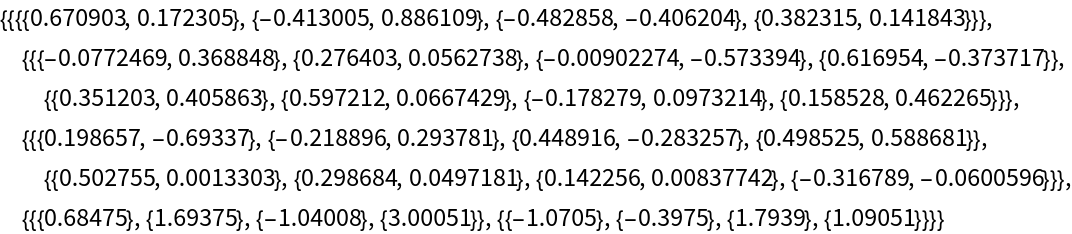

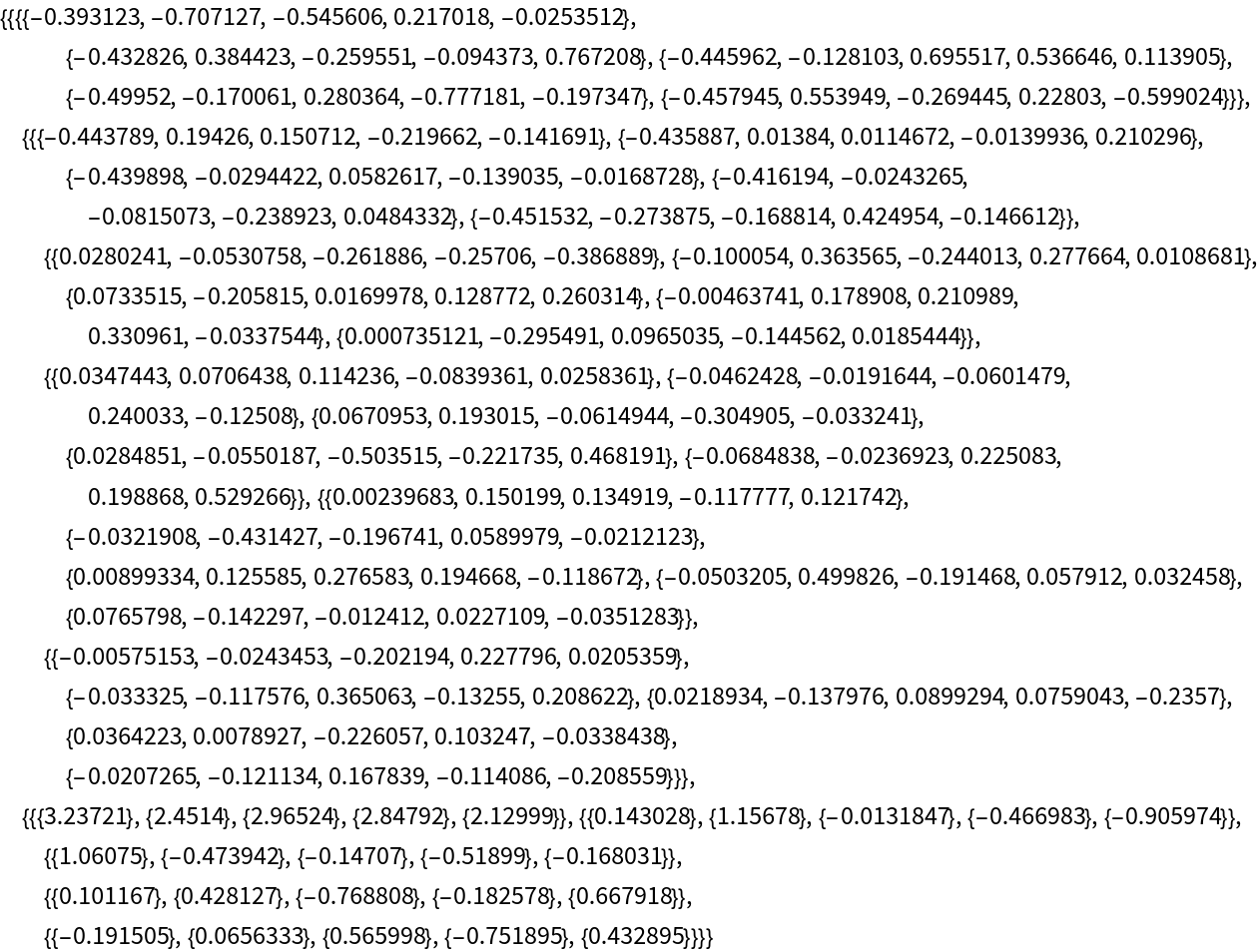

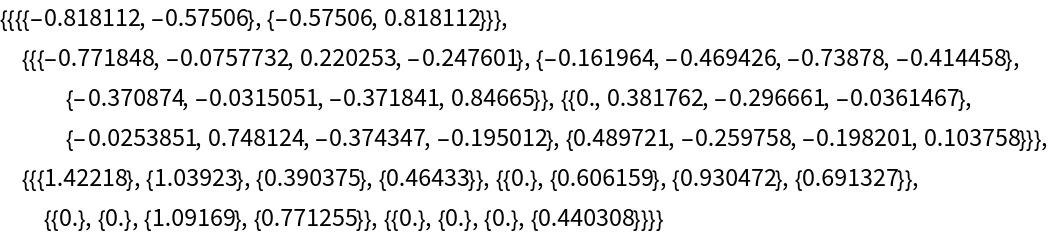

The result is a list of rank-3 arrays $\{A_1, A_2, \ldots, A_n\}$, called cores, where $n$ is the rank (number of indices) of the input tensor.

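The bond dimension is the size of the internal index that is contracted between two adjacent cores. The bond dimensions (also called TT-ranks) control how strongly the Tensor Train compresses the input: smaller bond dimensions mean fewer stored entries, at the cost of accuracy when truncation is involved.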
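For an exact Tensor Train, the smallest achievable bond dimension between cores $k$ and $k+1$ equals the rank of the $k$th unfolding of the input, that is, the input reshaped into a matrix with $d_1 \cdots d_k$ rows and $d_{k+1} \cdots d_n$ columns (where $d_k$ is the size of the $k$th dimension). The helper `unfoldingRanks` below is hypothetical and not part of the resource function; it only uses built-in functions. Whether TensorTrainDecomposition trims its exact bond dimensions down to these minimal values is not stated here.

```wolfram
(* Hypothetical helper (not part of TensorTrainDecomposition):
   rank of each unfolding, i.e. the array reshaped to a
   (d1*...*dk) x (d(k+1)*...*dn) matrix, for k = 1, ..., n - 1 *)
unfoldingRanks[t_?ArrayQ] := Module[{dims = Dimensions[t]},
  Table[
   MatrixRank[ArrayReshape[t, {Times @@ Take[dims, k], Times @@ Drop[dims, k]}]],
   {k, Length[dims] - 1}]
  ]

(* An outer product of vectors needs bond dimension 1 everywhere *)
unfoldingRanks[Outer[Times, {1, 2}, {3, 4, 5}, {6, 7}]]
(* {1, 1} *)

(* The tensor t[[i, j, k]] = i + j + k needs bond dimension 2 *)
unfoldingRanks[Array[#1 + #2 + #3 &, {2, 3, 4}]]
(* {2, 2} *)
```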

Each core $A_k$ has dimensions $\{\chi_{k-1}, d_k, \chi_k\}$, where $d_k$ is the size of the $k$th dimension of the input tensor and $\chi_k$ is the bond dimension between cores $k$ and $k+1$.

The first core has $\chi_0 = 1$ and the last core has $\chi_n = 1$.
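Written element by element, contracting the cores over the shared bond indices $\alpha_k$ gives back the entries of the input tensor $T$ (exactly without truncation, approximately with it). The boundary indices are fixed to 1 because $\chi_0 = \chi_n = 1$:

$$
T_{i_1 i_2 \cdots i_n} = \sum_{\alpha_1=1}^{\chi_1} \cdots \sum_{\alpha_{n-1}=1}^{\chi_{n-1}} (A_1)_{1,\, i_1,\, \alpha_1}\, (A_2)_{\alpha_1,\, i_2,\, \alpha_2} \cdots (A_n)_{\alpha_{n-1},\, i_n,\, 1}
$$

For fixed $i_1, \ldots, i_n$ this is a product of a $1 \times \chi_1$ row vector, $\chi_{k-1} \times \chi_k$ matrices, and a $\chi_{n-1} \times 1$ column vector, which is where the name Matrix Product State comes from.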

TensorTrainDecomposition accepts the following options:

| Option | Default | Description |
| --- | --- | --- |
| Method | "SVD" | decomposition method ("SVD" or "QR") |
| "MaxBondDimension" | Infinity | maximum bond dimension $\chi$ at each core |
| Tolerance | 0 | truncation threshold $\varepsilon$ |

With Method → "QR", the function ignores "MaxBondDimension" and Tolerance and returns the exact decomposition.

QRDecomposition does not provide singular values, so truncation is not available.

With the default settings, TensorTrainDecomposition returns an exact decomposition with no truncation.
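Because the default result is exact, contracting the cores back together should reproduce the input up to rounding. The helper `ttReconstruct` below is hypothetical (not part of the resource function) and assumes the core layout $\{\chi_{k-1}, d_k, \chi_k\}$ described above; `Dot` contracts the last index of its left argument with the first index of its right argument, which is exactly the shared bond index.

```wolfram
(* Hypothetical helper: contract cores {A1, ..., An} with dimensions
   {chi_(k-1), d_k, chi_k} back into a full array of dimensions {d1, ..., dn} *)
ttReconstruct[cores_List] := Module[{full = Fold[Dot, cores]},
  (* full has dimensions {1, d1, ..., dn, 1}; drop the two unit boundary bonds *)
  ArrayReshape[full, Dimensions[full][[2 ;; -2]]]
  ]

(* Two hand-built cores with dimensions {1, 2, 1} and {1, 3, 1} *)
ttReconstruct[{{{{1}, {2}}}, {{{3}, {4}, {5}}}}]
(* {{3, 4, 5}, {6, 8, 10}} *)

(* Usage with the resource function (needs access to the Function Repository):
   t = RandomReal[1, {3, 4, 5}];
   Max[Abs[ttReconstruct[ResourceFunction["TensorTrainDecomposition"][t]] - t]]
   is expected to be of the order of machine rounding error. *)
```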

Setting only "MaxBondDimension" controls memory: bond dimensions are capped at the specified value.
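A worked count, using illustrative numbers chosen here rather than taken from the function: core $A_k$ holds $\chi_{k-1} d_k \chi_k$ entries, so the whole train stores $\sum_{k=1}^{n} \chi_{k-1} d_k \chi_k$ numbers. Take a rank-10 input with every $d_k = 2$, assume its exact bond dimensions reach their upper bounds $\min(2^k, 2^{10-k})$, and set "MaxBondDimension" to 4. The bond dimensions become $\chi = (1, 2, 4, 4, 4, 4, 4, 4, 4, 2, 1)$ and the storage is

$$
\underbrace{1\cdot 2\cdot 2}_{A_1} + \underbrace{2\cdot 2\cdot 4}_{A_2} + \underbrace{6\,(4\cdot 2\cdot 4)}_{A_3,\ldots,A_8} + \underbrace{4\cdot 2\cdot 2}_{A_9} + \underbrace{2\cdot 2\cdot 1}_{A_{10}} = 4 + 16 + 192 + 16 + 4 = 232
$$

entries, compared with $2^{10} = 1024$ entries in the full array.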

Setting only Tolerance controls accuracy: bond dimensions adapt automatically to meet the error target.

With Method → "SVD", singular values are discarded, smallest first, as long as the sum of the squared discarded values does not exceed $\varepsilon^2$, where $\varepsilon$ is the value specified by Tolerance.
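A minimal sketch of the standard TT-SVD algorithm shows how both truncation controls can act on each SVD in a left-to-right sweep. It is not the resource function's source code: `keepCount` and `ttSVDSketch` are hypothetical names, the $\varepsilon^2$ rule is applied separately at every SVD step, combining the two limits by taking the smaller bond dimension is an assumption, and the input is converted to machine precision with `N`.

```wolfram
(* Hypothetical helper: number of singular values to keep so that the squares
   of the discarded ones sum to at most eps^2 (at least one is always kept).
   s must be sorted in decreasing order, as SingularValueDecomposition returns it. *)
keepCount[s_List, eps_] := Module[{tail = Reverse[Accumulate[Reverse[s^2]]]},
  (* tail[[j]] = s[[j]]^2 + ... + s[[-1]]^2, the error of keeping only j - 1 values *)
  Max[1, Count[tail, x_ /; x > eps^2]]
  ]

(* Hypothetical TT-SVD sketch: one SVD per bond, sweeping left to right.
   maxBond mimics "MaxBondDimension", eps mimics Tolerance (assumed per step). *)
ttSVDSketch[t_?ArrayQ, maxBond_ : Infinity, eps_ : 0] :=
 Module[{dims = Dimensions[t], n, c, chi = 1, cores = {}, u, w, v, k},
  n = Length[dims];
  (* first unfolding: d1 x (d2*...*dn), in machine precision *)
  c = N[ArrayReshape[t, {First[dims], Times @@ Rest[dims]}]];
  Do[
   {u, w, v} = SingularValueDecomposition[c];
   k = Min[keepCount[Diagonal[w], eps], maxBond];
   (* the kept left singular vectors form core j, dimensions {chi_(j-1), d_j, chi_j} *)
   AppendTo[cores, ArrayReshape[u[[All, ;; k]], {chi, dims[[j]], k}]];
   (* carry S.V^dagger to the right and fold the next index into the rows *)
   c = w[[;; k, ;; k]] . ConjugateTranspose[v[[All, ;; k]]];
   chi = k;
   c = ArrayReshape[c, {chi*dims[[j + 1]], Times @@ Drop[dims, j + 1]}],
   {j, n - 1}];
  (* the remainder becomes the last core, with chi_n = 1 *)
  Append[cores, ArrayReshape[c, {chi, Last[dims], 1}]]
  ]

keepCount[{3, 2, 1, 1/2}, 6/5]
(* 2: dropping 1 and 1/2 costs 1 + 1/4 = 5/4, which is at most (6/5)^2 = 36/25 *)
```

For example, `ttSVDSketch[t, 4]` caps every bond at 4, while `ttSVDSketch[t, Infinity, 10^-6]` lets the bond dimensions adapt to a per-step error target.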

The following timings compare decomposing an array of exact numbers (ttSlow) with decomposing its machine-precision approximation obtained with N (ttFast):

![AbsoluteTiming[ttSlow = ResourceFunction["TensorTrainDecomposition"]@exactTensor;]](https://www.wolframcloud.com/obj/resourcesystem/images/980/980ec590-2ab8-4480-b55f-c76aedd421d7/26813ac7f97d76c6.png)

![AbsoluteTiming[ttFast = ResourceFunction["TensorTrainDecomposition"]@N@exactTensor;]](https://www.wolframcloud.com/obj/resourcesystem/images/980/980ec590-2ab8-4480-b55f-c76aedd421d7/1af352ba6e156231.png)