# ```json

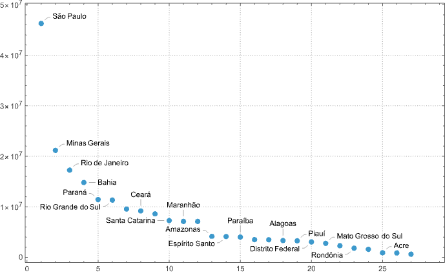

# {

# "Acre": 906876,

# "Alagoas": 3351543,

# "Amapá": 861773,

# "Amazonas": 4269603,

# "Bahia": 14812617,

# "Ceará": 9187103,

# "Distrito Federal": 3015268,

# "Espírito Santo": 4064052,

# "Goiás": 7294056,

# "Maranhão": 7075181,

# "Mato Grosso": 3526220,

# "Mato Grosso do Sul": 2778986,

# "Minas Gerais": 21168791,

# "Pará": 8602865,

# "Paraíba": 4039277,

# "Paraná": 11433957,

# "Pernambuco": 9557071,

# "Piauí": 3273227,

# "Rio de Janeiro": 17463349,

# "Rio Grande do Norte": 3506853,

# "Rio Grande do Sul": 11329605,

# "Rondônia": 1820329,

# "Roraima": 631181,

# "Santa Catarina": 7660443,

# "São Paulo": 46289333,

# "Sergipe": 2298696,

# "Tocantins": 1590248

# }

# ```
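The mapping above pairs each state name (the key) with an integer population (the value). A program can read it with any JSON parser. Below is a minimal Python sketch that loads the object, checks that every value is an integer, and ranks the states by population. It assumes the JSON arrives as text, for example from a file. To keep the example self-contained, it embeds only a four-entry excerpt of the data. The helpers `load_populations` and `rank_states` are hypothetical names chosen for illustration and do not come from the source.

```python
import json

# Hypothetical excerpt of the state -> population mapping, kept as a JSON
# string so the example mirrors reading the full block from a file.
SAMPLE = """
{
  "Acre": 906876,
  "Minas Gerais": 21168791,
  "Roraima": 631181,
  "São Paulo": 46289333
}
"""


def load_populations(raw: str) -> dict[str, int]:
    """Parse a JSON object of state -> population and require integer values."""
    data = json.loads(raw)
    for state, population in data.items():
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(population, bool) or not isinstance(population, int):
            raise ValueError(f"{state}: expected an integer, got {population!r}")
    return data


def rank_states(populations: dict[str, int]) -> list[tuple[str, int]]:
    """Return (state, population) pairs from most to least populous."""
    return sorted(populations.items(), key=lambda item: item[1], reverse=True)


populations = load_populations(SAMPLE)
ranking = rank_states(populations)
total = sum(populations.values())

# Print each state with its share of the excerpt's total population.
for state, population in ranking:
    print(f"{state:<15} {population:>12,} ({population / total:.1%})")
print(f"{'Total':<15} {total:>12,}")
```

Because `json.loads` keeps non-ASCII keys such as "São Paulo" exactly as written, lookups need the accented names. Loading the full block instead of the excerpt requires no code changes.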