Released in 2016 by Yahoo, this net is a binary classifier (safe/not safe) fine-tuned from a pre-trained, slimmed-down variant of ResNet-50 (ResNet-50 1by2). It predicts whether an image is not safe for work (NSFW) because it contains nudity or pornographic content. Yahoo chose this base architecture as a tradeoff between accuracy and computational cost.

Number of layers: 177

Parameter count: 5,944,514

Trained size: 24 MB
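As a rough consistency check, and assuming each parameter is stored as a 32-bit (4-byte) float, the parameter count accounts for the trained size:

$$
5{,}944{,}514 \times 4\ \text{bytes} = 23{,}778{,}056\ \text{bytes} \approx 24\ \text{MB}
$$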

Examples

Resource retrieval

Get the pre-trained net:
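A minimal sketch of this step. It assumes the net is published in the Wolfram Neural Net Repository under the name "Yahoo Open NSFW Model V1"; that name is an assumption and does not appear on this page.

```wolfram
(* Download (on first use, then cached) and load the trained net *)
(* The resource name below is assumed *)
net = NetModel["Yahoo Open NSFW Model V1"]
```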

Basic usage

Apply the trained net to an input image:
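A sketch of classifying a single image, assuming the resource name "Yahoo Open NSFW Model V1". The standard test image used here is only a placeholder input, not the example image from the original page; any Image expression can be substituted.

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
(* Placeholder input: a built-in test image *)
img = ExampleData[{"TestImage", "Peppers"}];
(* The net's output decoder returns the most likely class label *)
label = net[img]
```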

Obtain the probabilities for each class for two images:
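A sketch of batch evaluation with the "Probabilities" property, again assuming the resource name "Yahoo Open NSFW Model V1" and using two built-in test images as placeholder inputs.

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
imgs = {ExampleData[{"TestImage", "Peppers"}], ExampleData[{"TestImage", "Mandrill"}]};
(* One association of class -> probability per input image *)
probs = net[imgs, "Probabilities"]
```

Because the classifier is binary, the two probabilities for each image should sum to 1.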

Net information

Inspect the number of parameters of all arrays in the net:
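A sketch using NetInformation, assuming the resource name "Yahoo Open NSFW Model V1".

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
(* Association from each array's position in the net to its element count *)
counts = NetInformation[net, "ArraysElementCounts"]
```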

Obtain the total number of parameters:
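A sketch, assuming the resource name "Yahoo Open NSFW Model V1". If this is the net described here, the result should match the parameter count of 5,944,514 listed above.

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
(* Sum of the element counts of all learned arrays *)
total = NetInformation[net, "ArraysTotalElementCount"]
```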

Obtain the layer type counts:
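A sketch, assuming the resource name "Yahoo Open NSFW Model V1".

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
(* Association from layer type (e.g. ConvolutionLayer) to how often it occurs *)
layerTypes = NetInformation[net, "LayerTypeCounts"]
```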

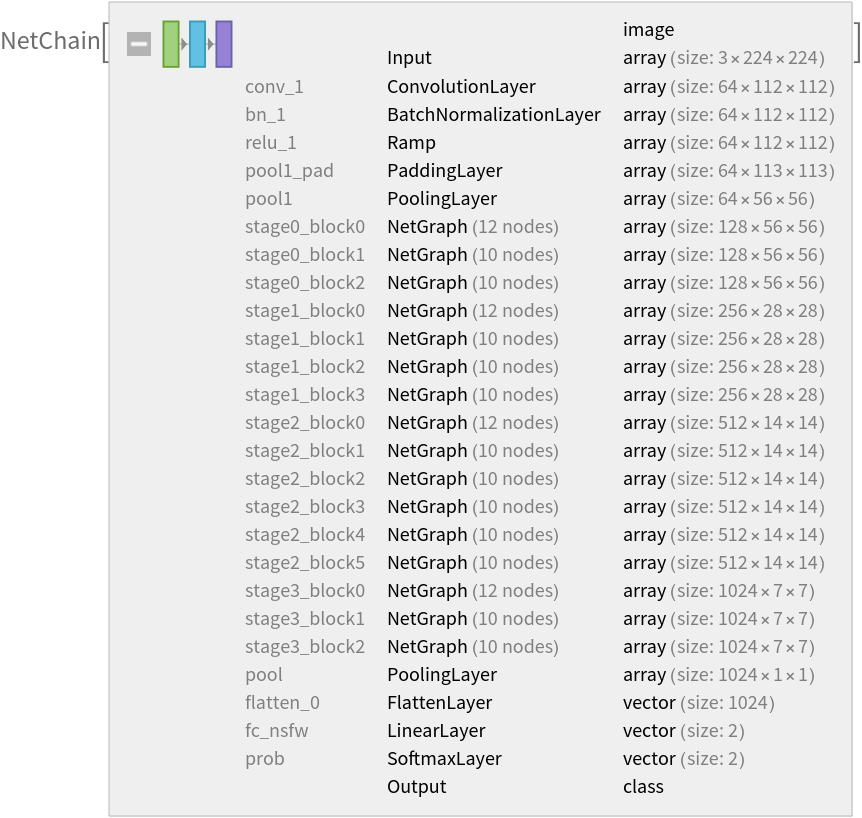

Display the summary graphic:
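A sketch, assuming the resource name "Yahoo Open NSFW Model V1".

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
(* Compact graphic of the net's layer structure *)
summary = NetInformation[net, "SummaryGraphic"]
```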

Export to MXNet

Export the net into a format that can be opened in MXNet:
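A sketch of the export, assuming the resource name "Yahoo Open NSFW Model V1". Writing into a fresh temporary directory is an illustrative choice that avoids overwriting existing files.

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
dir = CreateDirectory[]; (* new uniquely named temporary directory *)
(* Writes the symbolic graph as net.json in MXNet format *)
jsonPath = Export[FileNameJoin[{dir, "net.json"}], net, "MXNet"]
```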

Export also creates a net.params file containing parameters:
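A sketch that lists the exported files, assuming the resource name "Yahoo Open NSFW Model V1" and a fresh temporary directory as the export target.

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
dir = CreateDirectory[];
Export[FileNameJoin[{dir, "net.json"}], net, "MXNet"];
(* Expect both the JSON graph and the binary parameter file *)
exported = FileNames["net.*", dir]
```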

Get the size of the parameter file:
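A sketch, assuming the resource name "Yahoo Open NSFW Model V1" and exporting into a fresh temporary directory first so the block runs on its own.

```wolfram
net = NetModel["Yahoo Open NSFW Model V1"]; (* assumed resource name *)
dir = CreateDirectory[];
Export[FileNameJoin[{dir, "net.json"}], net, "MXNet"];
(* Size in bytes of the binary parameter file written alongside net.json *)
paramBytes = FileByteCount[FileNameJoin[{dir, "net.params"}]]
```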

The size is similar to the byte count of the resource object:
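A sketch for the comparison. It assumes the resource name "Yahoo Open NSFW Model V1" and that the repository's ResourceObject exposes a "ByteCount" property; both are assumptions.

```wolfram
(* Byte count of the repository resource, to compare with the net.params size *)
resourceBytes = ResourceObject["Yahoo Open NSFW Model V1"]["ByteCount"]
```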

Requirements

Wolfram Language 11.2 (September 2017) or above


![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/479791ef-28d2-4c7e-b097-3646cb064a04"]](https://www.wolframcloud.com/obj/resourcesystem/images/a14/a144f11f-3522-4db8-a3c3-ae0621ed1eae/5cb3596ff4567173.png)

![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/a556f73d-df3e-42d8-9e31-ccb1da45389a"]](https://www.wolframcloud.com/obj/resourcesystem/images/a14/a144f11f-3522-4db8-a3c3-ae0621ed1eae/7a457da0245142a0.png)