SSD Feature Pyramid Nets Trained on MS-COCO Data

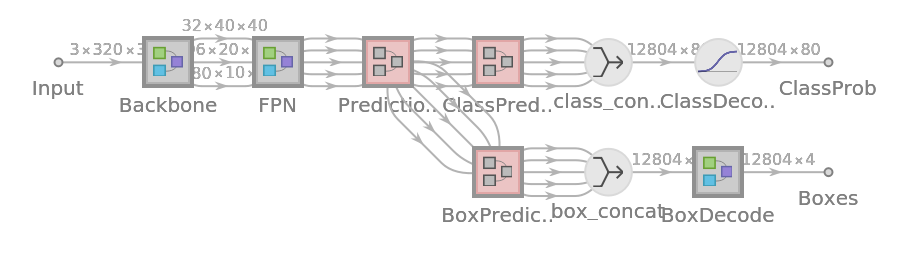

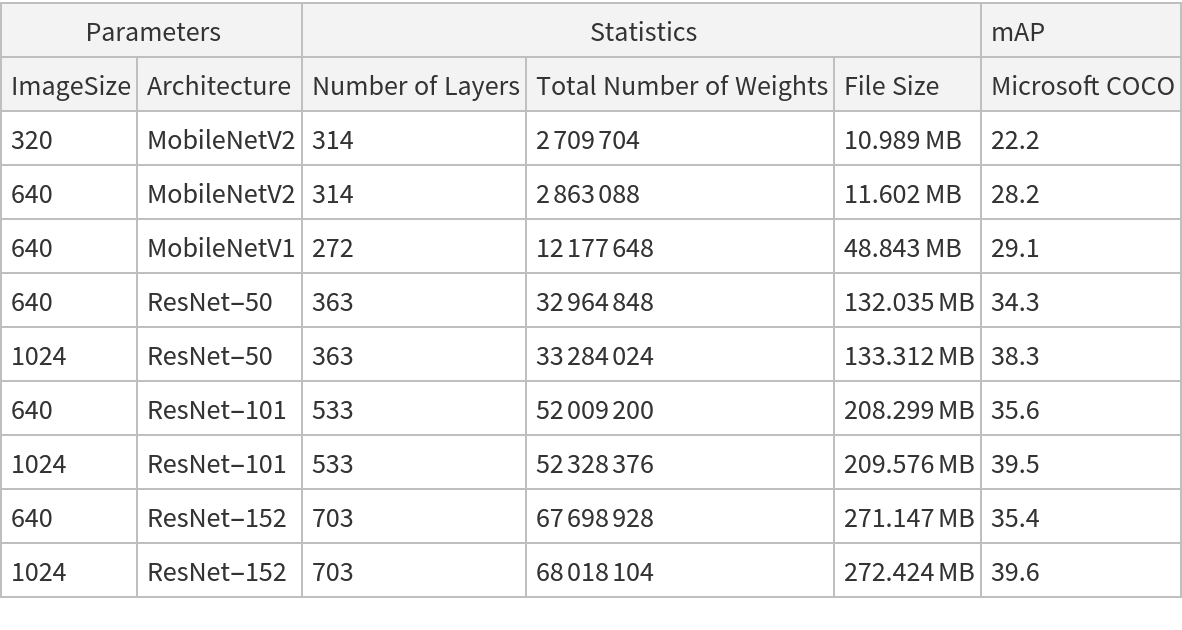

This family of object detection models incorporates a feature pyramid network (FPN) to recognize objects at different scales. To make lower-level feature maps semantically stronger, the FPN uses a top-down pathway that upsamples the spatially coarser but semantically stronger higher-level features, which are then merged with features from the bottom-up pathway via lateral connections. All models in this family have separate modules for box and class predictions, except for the MobileNetV2 models, which share some computations before making the final predictions. In addition, all models were trained using focal loss, which addresses the imbalance between foreground and background classes that arises in single-stage detectors.
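The idea behind focal loss can be sketched in a few lines of Python. This is the standard binary form, FL(p_t) = -alpha_t (1 - p_t)^gamma log(p_t). The defaults alpha = 0.25 and gamma = 2 are assumptions (common choices), not values confirmed for these particular models, and the function names are hypothetical.

```python
import math


def cross_entropy(p: float, y: int) -> float:
    """Binary cross-entropy for one anchor.

    p: predicted probability of the object class.
    y: 1 for a foreground anchor, 0 for background.
    """
    p_t = p if y == 1 else 1.0 - p
    return -math.log(p_t)


def focal_loss(p: float, y: int, alpha: float = 0.25, gamma: float = 2.0) -> float:
    """Binary focal loss: -alpha_t * (1 - p_t)**gamma * log(p_t).

    The (1 - p_t)**gamma factor shrinks the loss of well-classified
    (easy) anchors, most of which are background.
    alpha and gamma defaults are assumed, not taken from these models.
    """
    p_t = p if y == 1 else 1.0 - p
    alpha_t = alpha if y == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


if __name__ == "__main__":
    # Easy background anchor: the model is already 99% sure it is background.
    # Hard foreground anchor: the model gives the true class only 10%.
    for name, (p, y) in [("easy background", (0.01, 0)), ("hard foreground", (0.10, 1))]:
        print(f"{name}: CE={cross_entropy(p, y):.6f}  FL={focal_loss(p, y):.6f}")
```

Relative to cross-entropy, the easy background anchor's loss is multiplied by 0.75 × 0.01² = 7.5 × 10⁻⁵, while the hard foreground anchor keeps 0.25 × 0.9² ≈ 20% of its loss, so the many easy background anchors no longer dominate training.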

Examples

Resource retrieval

Get the pre-trained net:

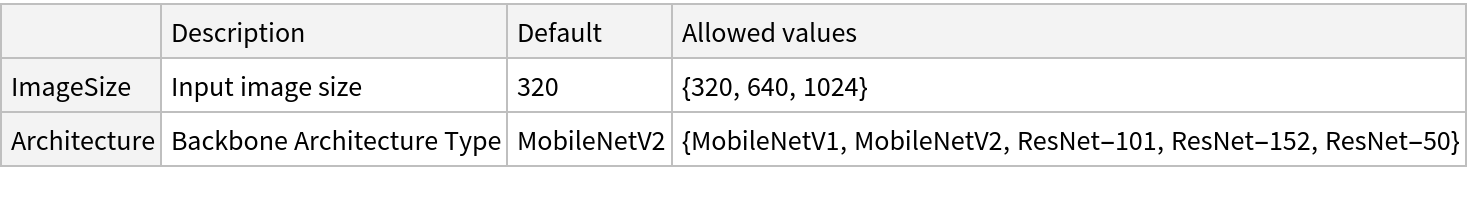

NetModel parameters

This model consists of a family of individual nets, each identified by a specific parameter combination. Inspect the available parameters:

Pick a non-default net by specifying the parameters:

Pick a non-default uninitialized net:

Evaluation function

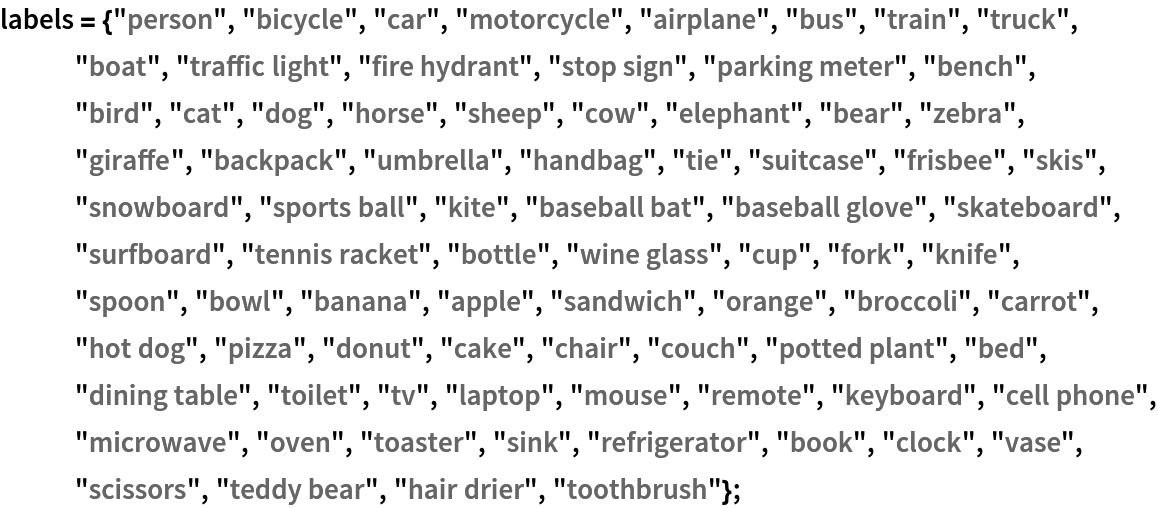

Define the label list for this model:

Write an evaluation function that scales the detected boxes to the input image size, discards detections below a confidence threshold and removes overlapping detections with non-maximum suppression:
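The filtering logic of such an evaluation function can be sketched in Python as a language-neutral illustration. It assumes each box is given as normalized (xmin, ymin, xmax, ymax) together with a list of class probabilities; `iou` and `evaluate` are hypothetical names, and scaling to pixel coordinates is left out. Each box keeps only its most probable class, boxes below the detection threshold are dropped, and overlapping boxes are pruned regardless of class. Using intersection-over-union with greedy suppression is an assumption of this sketch; the Wolfram code delegates to a `nonMaximumSuppression` helper whose overlap measure may differ.

```python
from typing import List, Sequence, Tuple

# (xmin, ymin, xmax, ymax), normalized to [0, 1] (assumed layout)
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def evaluate(boxes: Sequence[Box], class_probs: Sequence[Sequence[float]],
             labels: Sequence[str], detection_threshold: float = 0.5,
             overlap_threshold: float = 0.45) -> List[Tuple[Box, str, float]]:
    candidates = []
    for box, probs in zip(boxes, class_probs):
        # Assume one class per box: keep only the most probable class.
        k = max(range(len(probs)), key=probs.__getitem__)
        # Drop low-confidence boxes (score >= threshold is kept).
        if probs[k] >= detection_threshold:
            candidates.append((box, labels[k], probs[k]))
    # Greedy NMS: visit boxes by decreasing score and keep a box only if it
    # does not overlap an already-kept box by more than overlap_threshold.
    candidates.sort(key=lambda c: c[2], reverse=True)
    kept: List[Tuple[Box, str, float]] = []
    for cand in candidates:
        if all(iou(cand[0], k[0]) <= overlap_threshold for k in kept):
            kept.append(cand)
    return kept
```

For example, with `labels = ["cat", "dog"]`, two heavily overlapping "cat" boxes scoring 0.9 and 0.7 and a "dog" box scoring 0.3, only the 0.9 "cat" box survives: the dog box falls below the 0.5 threshold and the 0.7 box is suppressed by the stronger one.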

Basic usage

Obtain the detected bounding boxes with their corresponding classes and confidences for a given image:

Inspect which classes are detected:
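Counting the detected classes amounts to tallying the class field of each detection. A minimal Python sketch, assuming each detection is a (box, label, score) triple; `detected_classes` is a hypothetical name.

```python
from collections import Counter


def detected_classes(detections):
    """Tally class labels in a list of (box, label, score) detections."""
    return Counter(label for _, label, _ in detections)


if __name__ == "__main__":
    dets = [("box1", "cat", 0.9), ("box2", "dog", 0.7), ("box3", "cat", 0.6)]
    print(detected_classes(dets))
```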

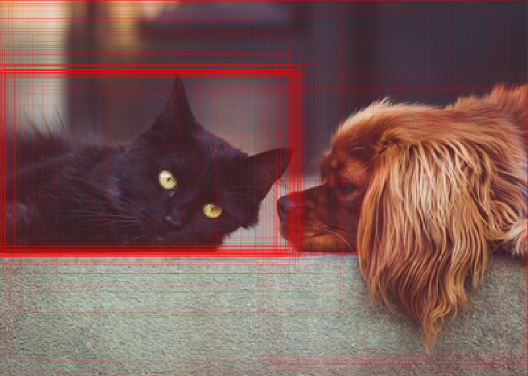

Visualize the detection:

Network result

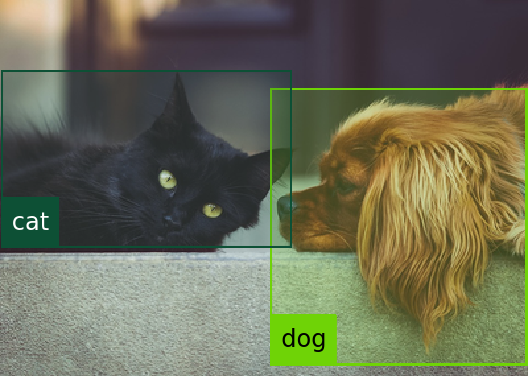

The network computes 12,804 bounding boxes and, for each box, the probability that the object it contains belongs to each class:
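One configuration that reproduces the figure of 12,804 boxes is sketched below. The configuration is a hypothesis, not documented here: a 320×320 input, five pyramid levels with strides 8 to 128 (feature maps of side 40, 20, 10, 5 and 3) and six anchors per location (for instance three aspect ratios at two scales). `anchor_count` is a hypothetical helper.

```python
import math


def anchor_count(input_size: int, strides=(8, 16, 32, 64, 128),
                 anchors_per_location: int = 6) -> int:
    """Total anchors over all pyramid levels (assumed configuration)."""
    total = 0
    for s in strides:
        side = math.ceil(input_size / s)  # feature-map side length at this level
        total += side * side * anchors_per_location
    return total


if __name__ == "__main__":
    print(anchor_count(320))  # 12804
```

Under these assumptions the count is (40² + 20² + 10² + 5² + 3²) × 6 = 2,134 × 6 = 12,804.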

Convert the box coordinates into the graphics coordinate system:
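The conversion mirrors the `boxDecoder` inside the evaluation function: image coordinates measure y downward from the top edge, while graphics coordinates measure y upward from the bottom. A Python sketch, assuming boxes are normalized (xmin, ymin, xmax, ymax); `to_graphics` is a hypothetical name. Passing width = height = 1 yields normalized graphics coordinates.

```python
def to_graphics(box, width, height):
    """Map a normalized image-space box to two graphics-space corners.

    Assumes box = (xmin, ymin, xmax, ymax) in [0, 1] with y measured
    from the top of the image; the result flips y so it is measured
    from the bottom, as in Rectangle[{a*w, h - b*h}, {c*w, h - d*h}].
    """
    xmin, ymin, xmax, ymax = box
    return (xmin * width, height - ymin * height), (xmax * width, height - ymax * height)


if __name__ == "__main__":
    print(to_graphics((0.25, 0.1, 0.75, 0.5), 640, 480))
```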

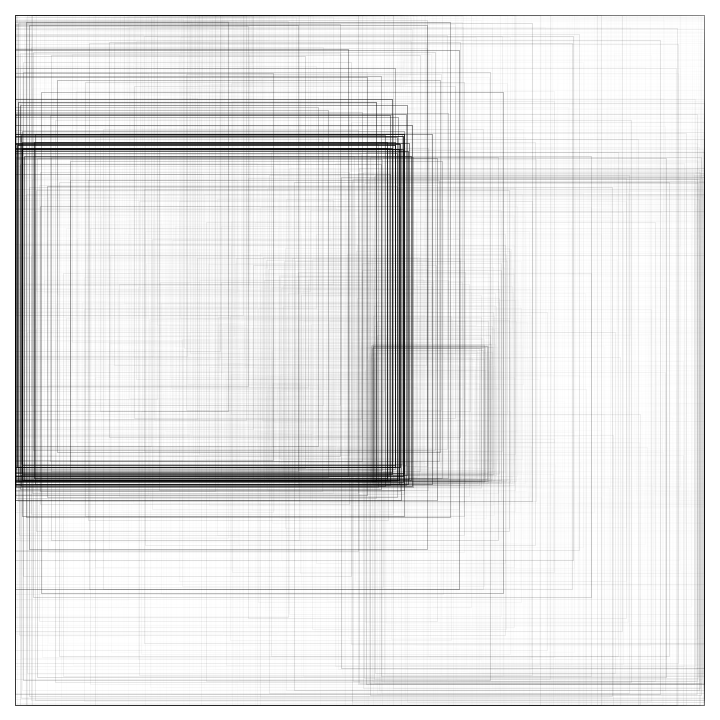

Visualize all the boxes predicted by the net, with each box's opacity scaled by its "objectness" measure (half the sum of its class probabilities):
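The opacity used in this plot is half the sum of each box's class probabilities, following the `Opacity[Total[#1]*0.5]` expression in the plotting code. A Python sketch; `objectness_opacity` is a hypothetical name, and clamping the value to 1 is a choice of this sketch, since per-class probabilities need not sum to 1.

```python
def objectness_opacity(class_probs, scale=0.5):
    """Opacity per box: scale * (sum of class probabilities), clamped to 1."""
    return [min(1.0, scale * sum(probs)) for probs in class_probs]


if __name__ == "__main__":
    print(objectness_opacity([[0.2, 0.4], [1.5, 0.9]]))
```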

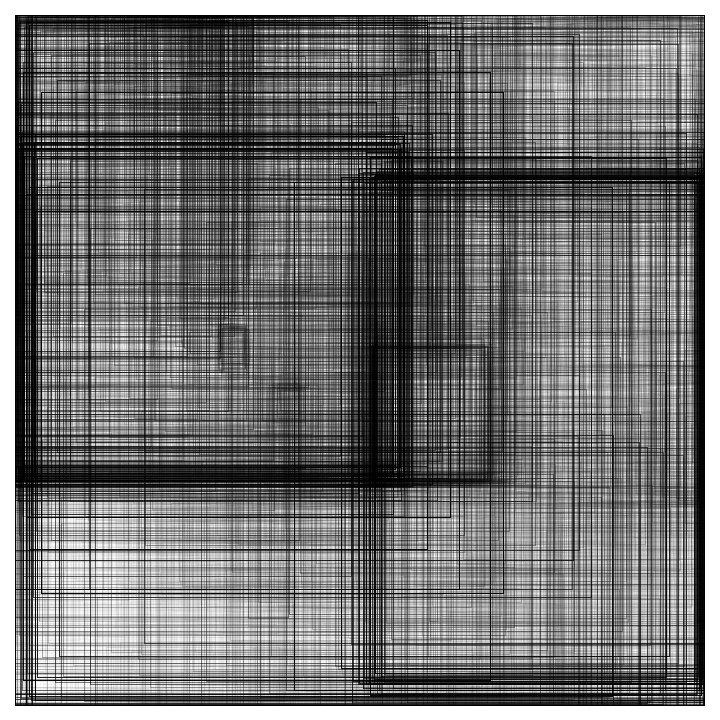

Visualize all the boxes, with each box's opacity scaled by the probability that it contains a cat:
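Selecting the cat probabilities means taking one column of the box-by-class probability matrix, located by the label's position in the label list, as `probabilities[[All, idx]]` does in the plotting code. A Python sketch with hypothetical names and a toy label list.

```python
def class_column(class_probs, labels, name):
    """Probability of class `name` for every box (one matrix column)."""
    idx = labels.index(name)
    return [row[idx] for row in class_probs]


if __name__ == "__main__":
    labels = ["person", "cat", "dog"]
    probs = [[0.1, 0.8, 0.05], [0.3, 0.2, 0.6]]
    print(class_column(probs, labels, "cat"))
```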

Superimpose the cat prediction on top of the scaled input received by the net:

Net information

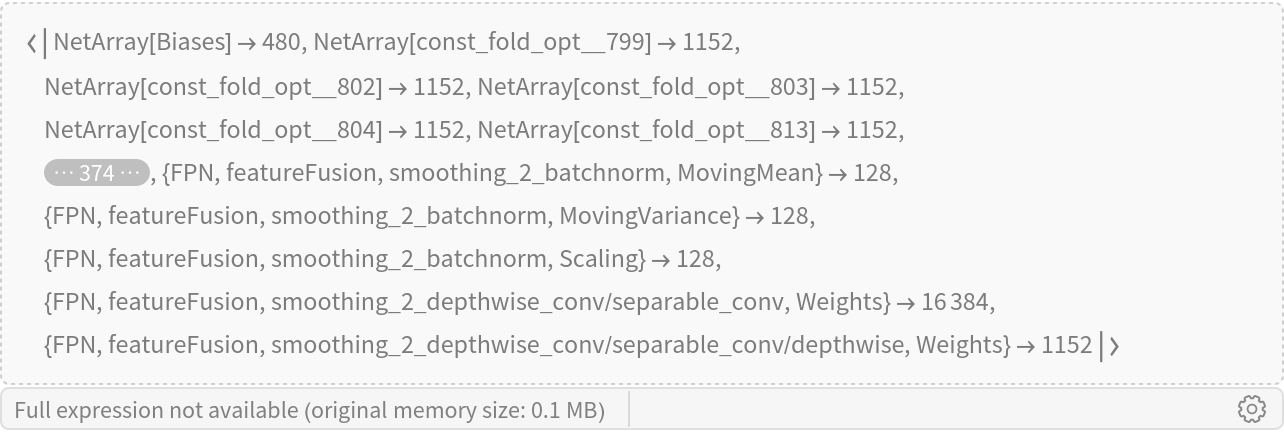

Inspect the number of parameters of all arrays in the net:

Obtain the total number of parameters:
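The total is the sum, over all arrays, of the product of each array's dimensions. A Python sketch; the shapes in the example are hypothetical (a 3×3 convolution from 3 to 32 channels plus its biases), not taken from this net.

```python
from math import prod


def total_parameters(shapes):
    """Sum of element counts over all array shapes."""
    return sum(prod(shape) for shape in shapes)


if __name__ == "__main__":
    # Hypothetical shapes: conv kernel (32, 3, 3, 3) and bias (32,)
    print(total_parameters([(32, 3, 3, 3), (32,)]))  # 896
```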

Obtain the layer type counts:

Display the summary graphic:


![netevaluate[model_, img_Image, detectionThreshold_ : .5, overlapThreshold_ : .45] := Module[
{netoutput, class2box, argMaxPerBox, maxPerBox, maxClasses, probableDetectionInd, probableClasses, probableScores, probableBoxes, nmsDetections, boxDecoder},
netoutput = model[img];
class2box = netoutput["ClassProb"];
(*Assuming there is one class per box*) argMaxPerBox = Flatten@Map[ Ordering[#, -1] &, class2box];
maxPerBox = Map[Max, class2box];
maxClasses = labels[[argMaxPerBox]];
(*Filter out the low score boxes*) probableDetectionInd = UnitStep[maxPerBox - detectionThreshold];
{probableClasses, probableScores, probableBoxes} = Map[Pick[#, probableDetectionInd, 1] &, {maxClasses, maxPerBox, netoutput["Boxes"]}];
(*Apply NMS*) nmsDetections = nonMaximumSuppression[
probableBoxes -> probableScores, {"Region", "Index"}, MaxOverlapFraction -> overlapThreshold];
boxDecoder[{a_, b_, c_, d_}, {w_, h_}] := Rectangle[{a*w, h - b*h}, {c*w, h - d*h}]; Map[{boxDecoder[ First[#], ImageDimensions[img]], probableClasses[[Last[#]]], probableScores[[Last[#]]]} &, nmsDetections]
]](https://www.wolframcloud.com/obj/resourcesystem/images/d7a/d7a23ba2-0072-4f60-a7e3-5f04144bc20b/165f83283cf7bc35.png)

![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/99f0b348-a09f-487c-8dbe-4b4edc458178"]](https://www.wolframcloud.com/obj/resourcesystem/images/d7a/d7a23ba2-0072-4f60-a7e3-5f04144bc20b/2662d811d673195c.png)

![Graphics[
MapThread[{EdgeForm[Opacity[Total[#1]*0.5]], Rectangle @@ #2} &, {probabilities, rectangles}],
BaseStyle -> {FaceForm[], EdgeForm[{Thin, Black}]}
]](https://www.wolframcloud.com/obj/resourcesystem/images/d7a/d7a23ba2-0072-4f60-a7e3-5f04144bc20b/717de1f5fd2f7089.png)

![Graphics[
MapThread[{EdgeForm[Opacity[#1]], Rectangle @@ #2} &, {probabilities[[All, idx]], rectangles}],
BaseStyle -> {FaceForm[], EdgeForm[{Thin, Black}]}
]](https://www.wolframcloud.com/obj/resourcesystem/images/d7a/d7a23ba2-0072-4f60-a7e3-5f04144bc20b/2f404ccf8a22f187.png)

![HighlightImage[testImage, Graphics[MapThread[{EdgeForm[{Opacity[#1]}], Rectangle @@ (#2*{ImageDimensions[testImage], ImageDimensions[testImage]})} &, {probabilities[[All, idx]], rectangles}]], BaseStyle -> {FaceForm[], EdgeForm[{Thin, Red}]}]](https://www.wolframcloud.com/obj/resourcesystem/images/d7a/d7a23ba2-0072-4f60-a7e3-5f04144bc20b/1b29230c128c095a.png)