Resource retrieval

Get the pre-trained net:
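A minimal sketch of the retrieval step, assuming the model name that appears elsewhere on this page. The first evaluation downloads the resource, so it needs network access.

```wolfram
(* Download (on first use) and load the default pre-trained net *)
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"]
```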

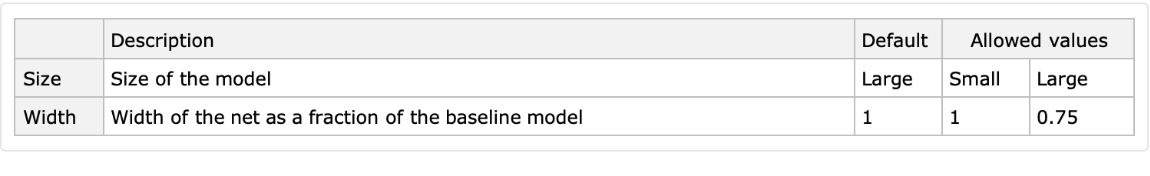

NetModel parameters

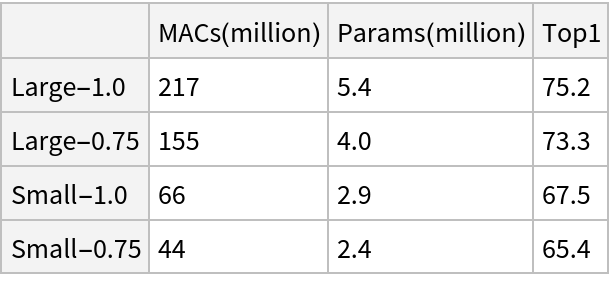

This model consists of a family of individual nets, each identified by a specific parameter combination. Inspect the available parameters:
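The parameter listing can probably be obtained with the "ParametersInformation" property of NetModel, as sketched here; the exact shape of the returned summary may vary between versions.

```wolfram
(* List the parameter names, their defaults and their allowed values *)
NetModel["MobileNet V3 Trained on ImageNet Competition Data", "ParametersInformation"]
```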

Pick a non-default net by specifying the parameters:

Pick a non-default uninitialized net:
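Both selections above can be sketched with two small helpers. The helper names pickNet and pickUninitializedNet are hypothetical, and the parameter name in the usage comment is only a placeholder: substitute the names and values that the parameter listing reports for this model.

```wolfram
modelName = "MobileNet V3 Trained on ImageNet Competition Data";

(* Hypothetical helper: select a member of the family by its parameter rules *)
pickNet[params__Rule] := NetModel[{modelName, params}]

(* Hypothetical helper: same selection, but without trained weights *)
pickUninitializedNet[params__Rule] :=
  NetModel[{modelName, params}, "UninitializedEvaluationNet"]

(* Usage, with a placeholder parameter name that may not exist for this model:
   pickNet["SomeParameter" -> someValue] *)
```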

Basic usage

Classify an image:
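A sketch of the classification call. The input image here is an assumption: it is simply the first built-in test image from ExampleData, standing in for the image used on the original page.

```wolfram
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"];

(* Stand-in input: the first built-in test image, not the page's original example *)
img = ExampleData[First[ExampleData["TestImage"]]];

(* Applying the net to an image returns the most likely class *)
pred = net[img]
```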

The prediction is an Entity object, which can be queried:
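For instance, the entity's definition can be requested as sketched here; the stand-in test image is an assumption, not the page's original input.

```wolfram
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"];
pred = net[ExampleData[First[ExampleData["TestImage"]]]]; (* stand-in image *)

(* Query a single property of the predicted Entity *)
pred["Definition"]
```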

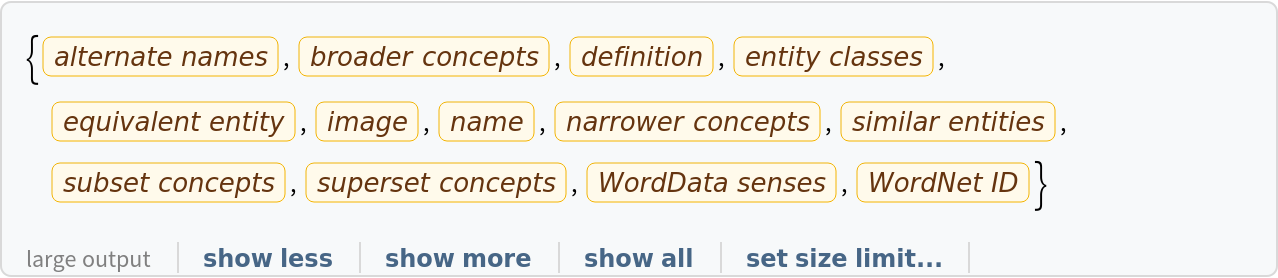

Get a list of available properties of the predicted Entity:
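A sketch of the property listing, again using a stand-in test image as an assumption.

```wolfram
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"];
pred = net[ExampleData[First[ExampleData["TestImage"]]]]; (* stand-in image *)

(* List every property that can be queried on the predicted Entity *)
pred["Properties"]
```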

Obtain the probabilities of the 10 most likely entities predicted by the net:
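The top-10 query can be sketched with the "TopProbabilities" option of the class decoder, passed as a second argument to the net. The input image is a stand-in and an assumption.

```wolfram
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"];
img = ExampleData[First[ExampleData["TestImage"]]]; (* stand-in image *)

(* Return the 10 most likely classes as entity -> probability rules *)
net[img, {"TopProbabilities", 10}]
```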

An object outside the list of ImageNet classes will be misidentified:

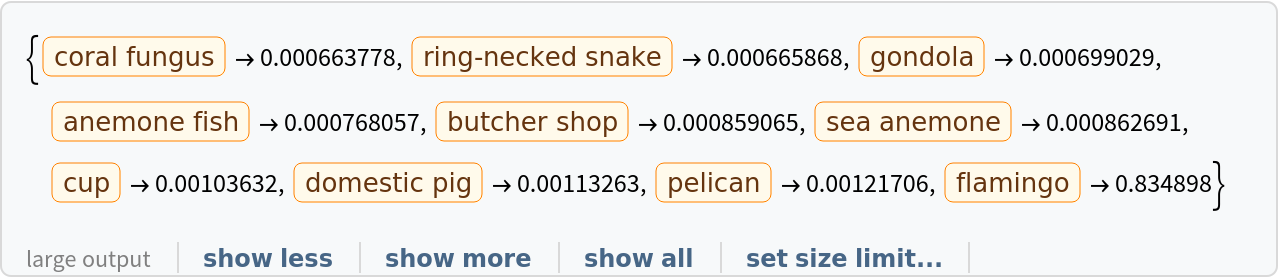

Obtain the list of names of all available classes:
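The expression recorded in this page's image caption for this step extracts the decoder's labels and maps them to their names:

```wolfram
(* Extract the class entities from the output decoder, then look up their names *)
EntityValue[
 NetExtract[
   NetModel["MobileNet V3 Trained on ImageNet Competition Data"],
   "Output"][["Labels"]],
 "Name"]
```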

Feature extraction

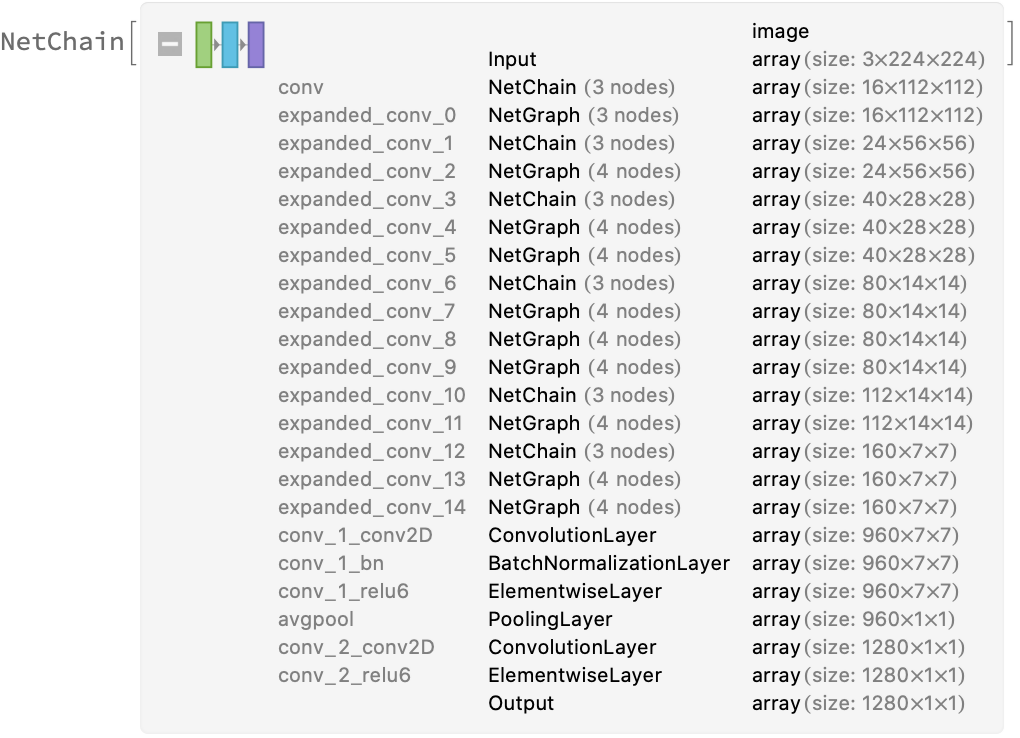

Remove the last layers of the trained net so that the net produces a vector representation of an image:
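A rough sketch of the truncation, using NetDrop. The number of layers to drop (2 here) is an assumption, not a value taken from this page: inspect the net and adjust the count until the output is a plain feature vector.

```wolfram
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"];

(* Assumption: dropping the last 2 layers removes the classification head;
   the correct count depends on how the net is laid out *)
extractor = NetDrop[net, -2]
```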

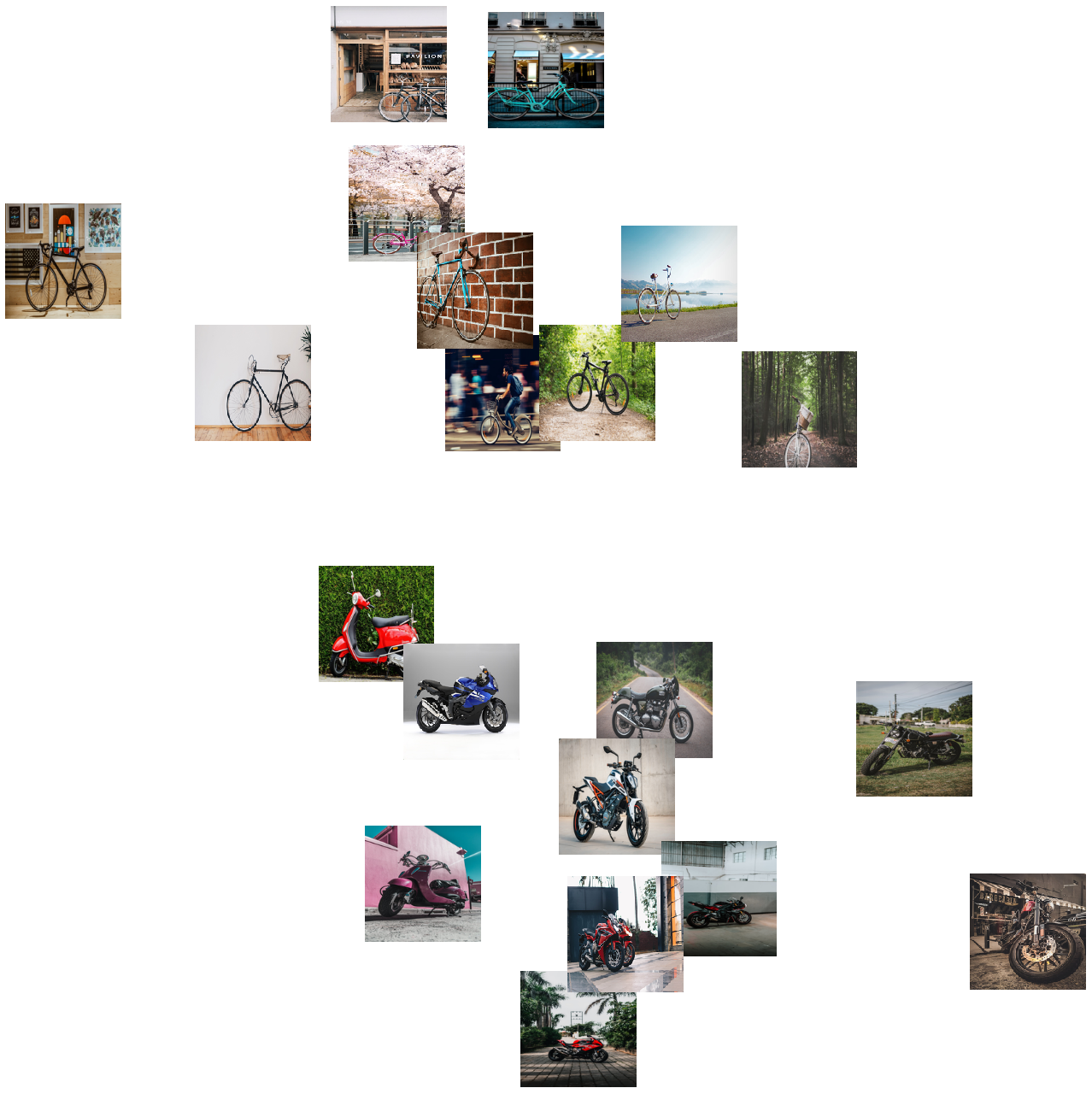

Get a set of images:

Visualize the features of a set of images:
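One way to sketch the visualization is FeatureSpacePlot with the truncated net as feature extractor. The image set (eight built-in test images) and the number of dropped layers are both assumptions standing in for the page's own inputs.

```wolfram
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"];
extractor = NetDrop[net, -2]; (* assumed layer count, adjust as needed *)

(* Stand-in image set: the first eight built-in test images *)
imgs = ExampleData /@ Take[ExampleData["TestImage"], 8];

(* Project the extracted features to 2D and place each image at its position *)
FeatureSpacePlot[imgs, FeatureExtractor -> extractor]
```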

Visualize convolutional weights

Extract the weights of the first convolutional layer in the trained net:

Show the dimensions of the weights:

Visualize the weights as a list of 16 images of size 3x3:
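The three steps above can be sketched as follows. The layer is located by its weight dimensions rather than by name, because the layer name is not given on this page. This assumes the first convolution is the only array of dimensions {16, 3, 3, 3}, which matches the "16 images of size 3x3" description for an RGB input but is not confirmed here.

```wolfram
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"];

(* Assumption: the first convolution has 16 filters over 3 channels with 3x3 kernels *)
weights = First[
   Select[Values[Information[net, "Arrays"]],
    Dimensions[#] === {16, 3, 3, 3} &]];

(* Show the dimensions of the weights *)
Dimensions[weights]

(* Turn each 3-channel 3x3 filter into a small color image and rescale it *)
ImageAdjust[Image[#, Interleaving -> False]] & /@ Normal[weights]
```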

Transfer learning

Use the pre-trained model to build a classifier for telling apart images of motorcycles and bicycles. Create a test set and a training set:

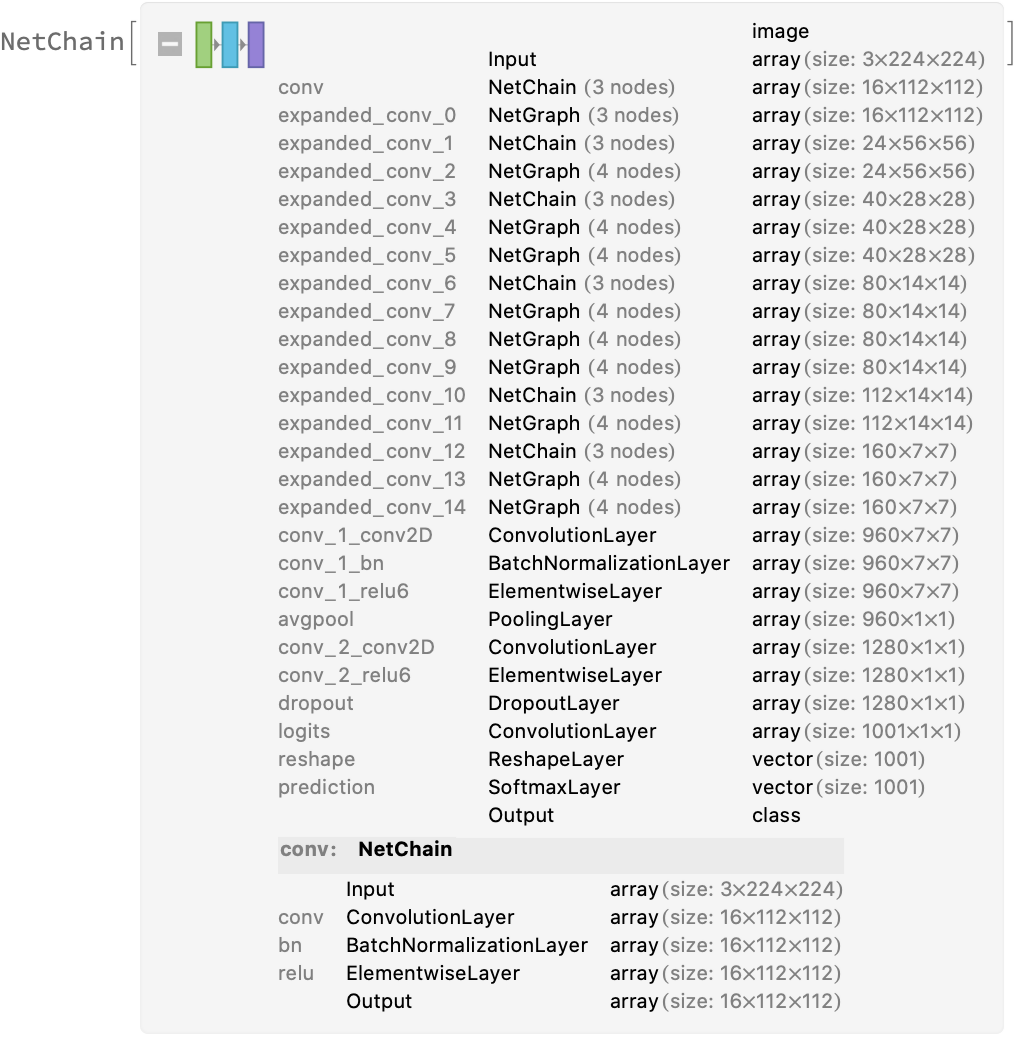

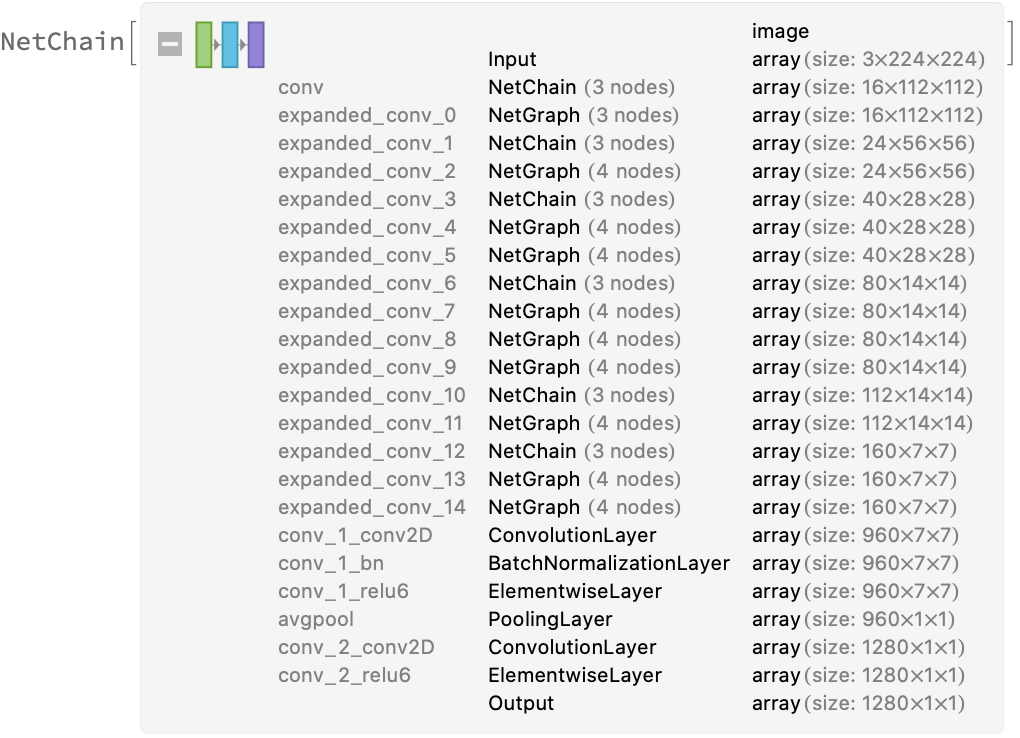

Remove the last layers from the pre-trained net:

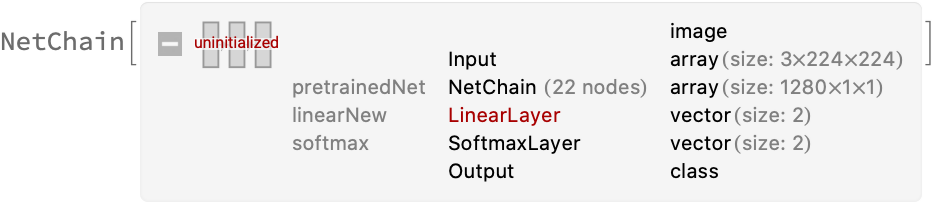

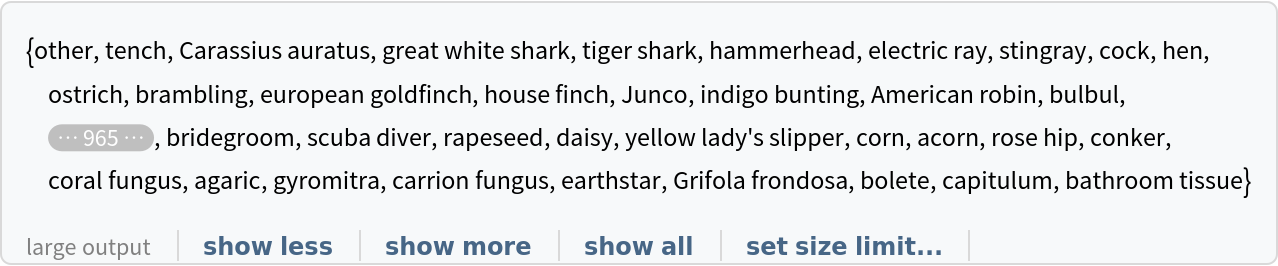

Create a new net composed of the pre-trained net followed by a linear layer and a softmax layer:
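The construction recorded in this page's image caption can be wrapped as below. The wrapper name buildClassifier is hypothetical; tempNet is the truncated pre-trained net from the previous step.

```wolfram
(* Hypothetical wrapper around the NetChain given on this page *)
buildClassifier[tempNet_] := NetChain[
  <|"pretrainedNet" -> tempNet,
    "linearNew" -> LinearLayer[],
    "softmax" -> SoftmaxLayer[]|>,
  "Output" -> NetDecoder[{"Class", {"bicycle", "motorcycle"}}]]

(* Usage: newNet = buildClassifier[tempNet] *)
```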

Train on the dataset, freezing all the weights except for those in the "linearNew" layer (use TargetDevice -> "GPU" for training on a GPU):

Perfect accuracy is obtained on the test set:
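Training and evaluation can be sketched as one helper. The name trainAndEvaluate is hypothetical, and trainSet and testSet are assumed to be lists of rules of the form image -> "bicycle" or image -> "motorcycle". Freezing is expressed through LearningRateMultipliers, which sets the learning rate to zero everywhere except "linearNew".

```wolfram
(* Hypothetical helper: train only "linearNew", then measure test accuracy *)
trainAndEvaluate[newNet_, trainSet_, testSet_] := Module[{trainedNet},
  trainedNet = NetTrain[newNet, trainSet,
    LearningRateMultipliers -> {"linearNew" -> 1, _ -> 0}];
  <|"Net" -> trainedNet,
    "Accuracy" -> ClassifierMeasurements[trainedNet, testSet, "Accuracy"]|>]

(* Usage: trainAndEvaluate[newNet, trainSet, testSet]["Accuracy"] *)
```

Adding TargetDevice -> "GPU" to the NetTrain call moves training to a GPU, as noted above.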

Net information

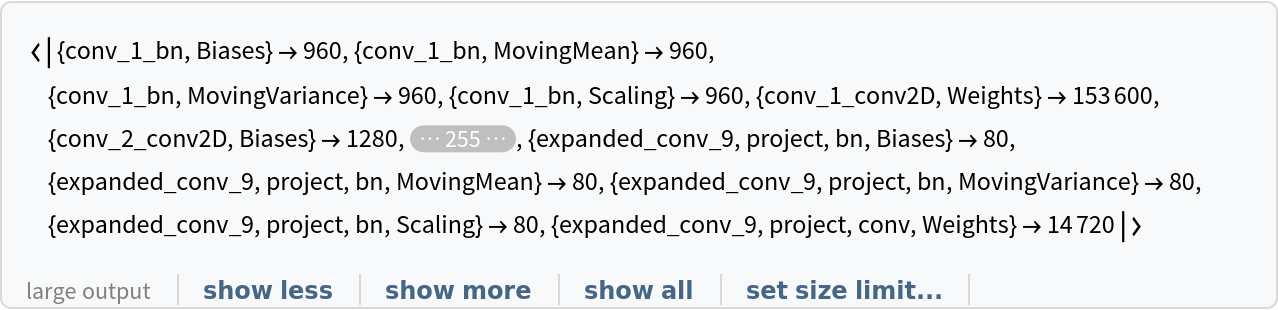

Inspect the number of parameters of all arrays in the net:

Obtain the total number of parameters:

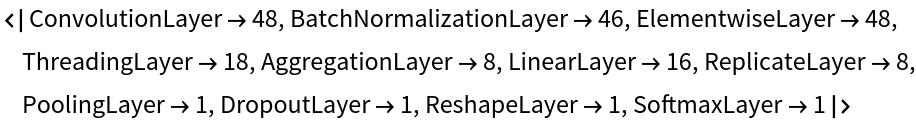

Obtain the layer type counts:
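The three queries in this section can be sketched with Information. The property names below are the ones commonly used for nets, and the output layout may differ between versions.

```wolfram
net = NetModel["MobileNet V3 Trained on ImageNet Competition Data"];

(* Number of parameters in each array of the net *)
Information[net, "ArraysElementCounts"]

(* Total number of parameters *)
Information[net, "ArraysTotalElementCount"]

(* How many layers of each type the net contains *)
Information[net, "LayerTypeCounts"]
```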

Export to MXNet

Export the net into a format that can be opened in MXNet:

Export also creates a net.params file containing parameters:

Get the size of the parameter file:
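A sketch of the export steps, assuming a Wolfram Language version that still supports the "MXNet" export format. The temporary-directory location and the net.json file name are illustrative choices; the net.params name follows the description above.

```wolfram
(* Write the architecture to net.json; a net.params file is created alongside it *)
jsonPath = Export[
   FileNameJoin[{$TemporaryDirectory, "net.json"}],
   NetModel["MobileNet V3 Trained on ImageNet Competition Data"],
   "MXNet"];

(* Locate the parameter file next to the exported JSON file *)
paramPath = FileNameJoin[{DirectoryName[jsonPath], "net.params"}];

(* Size of the parameter file in bytes *)
FileByteCount[paramPath]
```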

![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/83b2c58a-9e96-4523-8f60-e941f286e2d0"]](https://www.wolframcloud.com/obj/resourcesystem/images/0bd/0bd54974-f5af-40bb-99a8-6fc03ac79aa6/5b9a4382a79659bd.png)

![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/897c908a-b350-407a-b771-952d4d0e63b6"]](https://www.wolframcloud.com/obj/resourcesystem/images/0bd/0bd54974-f5af-40bb-99a8-6fc03ac79aa6/565bdcb0ba8cf586.png)

![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/e8e51261-9188-405b-b25a-dfd32ff5da51"]](https://www.wolframcloud.com/obj/resourcesystem/images/0bd/0bd54974-f5af-40bb-99a8-6fc03ac79aa6/160a95e0b44dcee4.png)

![EntityValue[NetExtract[NetModel["MobileNet V3 Trained on ImageNet Competition Data"], "Output"][["Labels"]], "Name"]](https://www.wolframcloud.com/obj/resourcesystem/images/0bd/0bd54974-f5af-40bb-99a8-6fc03ac79aa6/7beebec98902c434.png)

![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/b07b0266-1b5a-4822-ae6a-2aff7d0e0f66"]](https://www.wolframcloud.com/obj/resourcesystem/images/0bd/0bd54974-f5af-40bb-99a8-6fc03ac79aa6/2d4cc764c5e4b250.png)

![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/aad043db-52ab-4113-90b6-ce5cbc546349"]](https://www.wolframcloud.com/obj/resourcesystem/images/0bd/0bd54974-f5af-40bb-99a8-6fc03ac79aa6/0169a85f6bc00b18.png)

![(* Evaluate this cell to get the example input *) CloudGet["https://www.wolframcloud.com/obj/b8489842-a063-4a25-beab-d84fbd667cdd"]](https://www.wolframcloud.com/obj/resourcesystem/images/0bd/0bd54974-f5af-40bb-99a8-6fc03ac79aa6/2e5b9dc5319c8155.png)

![newNet = NetChain[<|"pretrainedNet" -> tempNet, "linearNew" -> LinearLayer[], "softmax" -> SoftmaxLayer[]|>, "Output" -> NetDecoder[{"Class", {"bicycle", "motorcycle"}}]]](https://www.wolframcloud.com/obj/resourcesystem/images/0bd/0bd54974-f5af-40bb-99a8-6fc03ac79aa6/0e1411d9c2200e37.png)